Intelligence Satisfaction Score (ISAT)

An industry-first framework to measure the effectiveness of an intelligent virtual assistant

Only 2% of users share feedback about their experience with a virtual assistant

Such feedback is useful but says nothing about queries resolved, false positives, confidence thresholds, etc.

Standard market metrics such as retention rate, engagement, and average time spent do not apply to virtual assistants

Complex multi-turn conversations can carry several sentiments, which makes them difficult to analyze

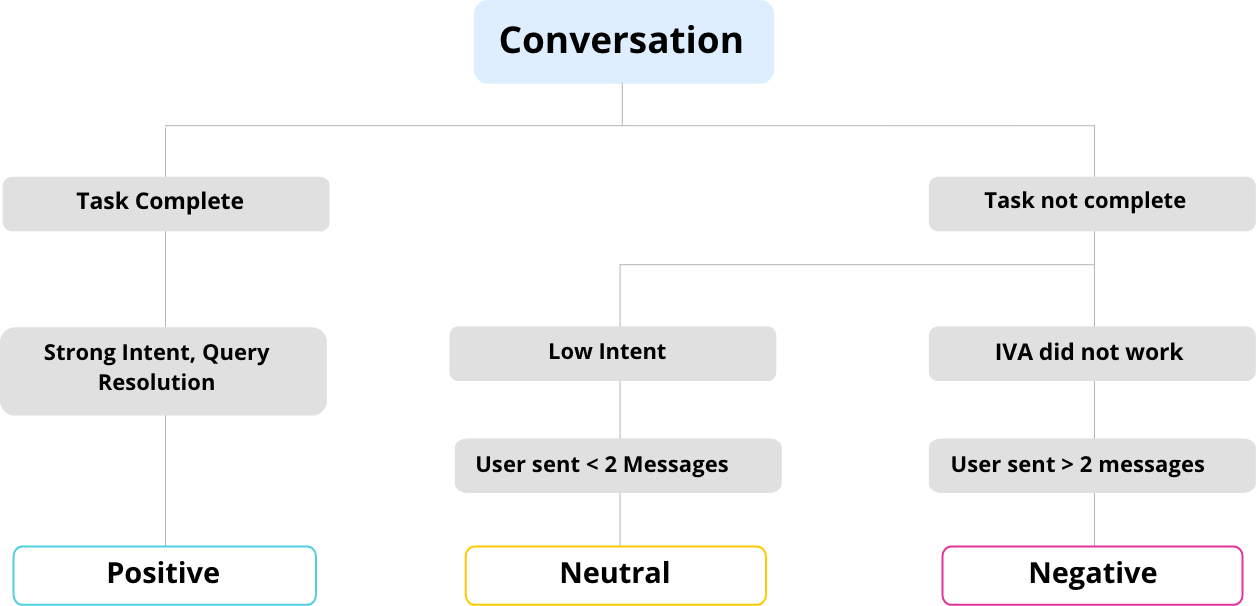

Steps to Arrive at the ISAT for a given virtual assistant or chatbot

Analyzing Positive, Negative and Neutral ISAT Conversations

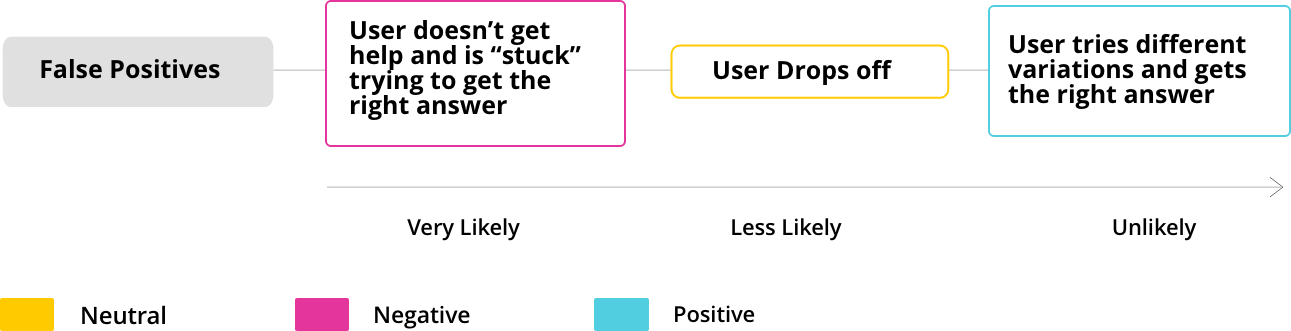

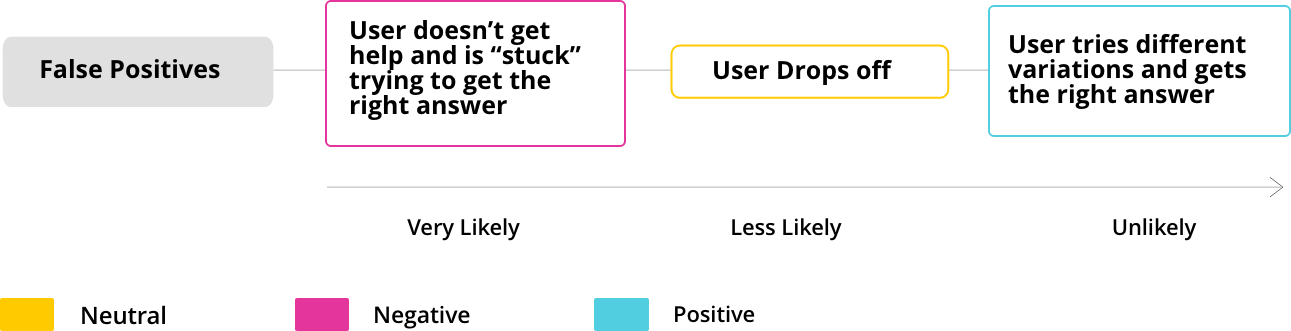

A conversation with a negative ISAT score reflects a poor experience in which the IVA failed to understand and resolve the user’s query. The model is validated by adding up all conversations that contained a user repetition (UR), a bot repetition (BR), or cuss words (CW).

| UR | BR | CW | Total Negative Conversations |
|---|---|---|---|
| 6.0% | 3.0% | 2.0% | 11.0% |
| 1.0% | 0.0% | 0.0% | 1.0% |
| 4.0% | 2.0% | 2.0% | 8.0% |
| 14.0% | 3.0% | 3.0% | 17.0% |
| 1.0% | 1.0% | 1.0% | 2.0% |
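The validation signals above can be illustrated with a minimal Python sketch. It is not Haptik's implementation. The detection rules are assumptions made for illustration: a repetition is taken to be the same normalized message sent twice in a row by one speaker, and `CUSS_WORDS` is a hypothetical placeholder list. The `Turn` type and all function names are likewise invented here.

```python
from dataclasses import dataclass

# Hypothetical word list; a real deployment would use its own lexicon.
CUSS_WORDS = {"damn", "stupid"}


@dataclass
class Turn:
    speaker: str  # "user" or "bot"
    text: str


def _normalize(text):
    """Lowercase the text and collapse whitespace."""
    return " ".join(text.lower().split())


def has_repetition(turns, speaker):
    """True if `speaker` sends the same normalized message twice in a row.

    Assumption: exact-match repetition; real systems may use fuzzy matching.
    """
    messages = [_normalize(t.text) for t in turns if t.speaker == speaker]
    return any(a == b for a, b in zip(messages, messages[1:]))


def has_cuss_word(turns):
    """True if any user message contains a word from CUSS_WORDS."""
    for t in turns:
        if t.speaker == "user":
            words = {w.strip(".,!?") for w in _normalize(t.text).split()}
            if words & CUSS_WORDS:
                return True
    return False


def negative_signals(turns):
    """Return the three validation signals for one conversation."""
    return {
        "UR": has_repetition(turns, "user"),
        "BR": has_repetition(turns, "bot"),
        "CW": has_cuss_word(turns),
    }


def negative_share(conversations):
    """Percentage of conversations per signal, plus the share with any signal."""
    counts = {"UR": 0, "BR": 0, "CW": 0, "total_negative": 0}
    if not conversations:
        return {name: 0.0 for name in counts}
    for turns in conversations:
        signals = negative_signals(turns)
        for name, hit in signals.items():
            counts[name] += hit
        # A conversation with several signals is counted once in the total.
        counts["total_negative"] += any(signals.values())
    return {name: 100.0 * c / len(conversations) for name, c in counts.items()}
```

In this sketch a conversation that trips more than one signal is counted only once in `total_negative`, so the total can be lower than the sum of the three columns.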

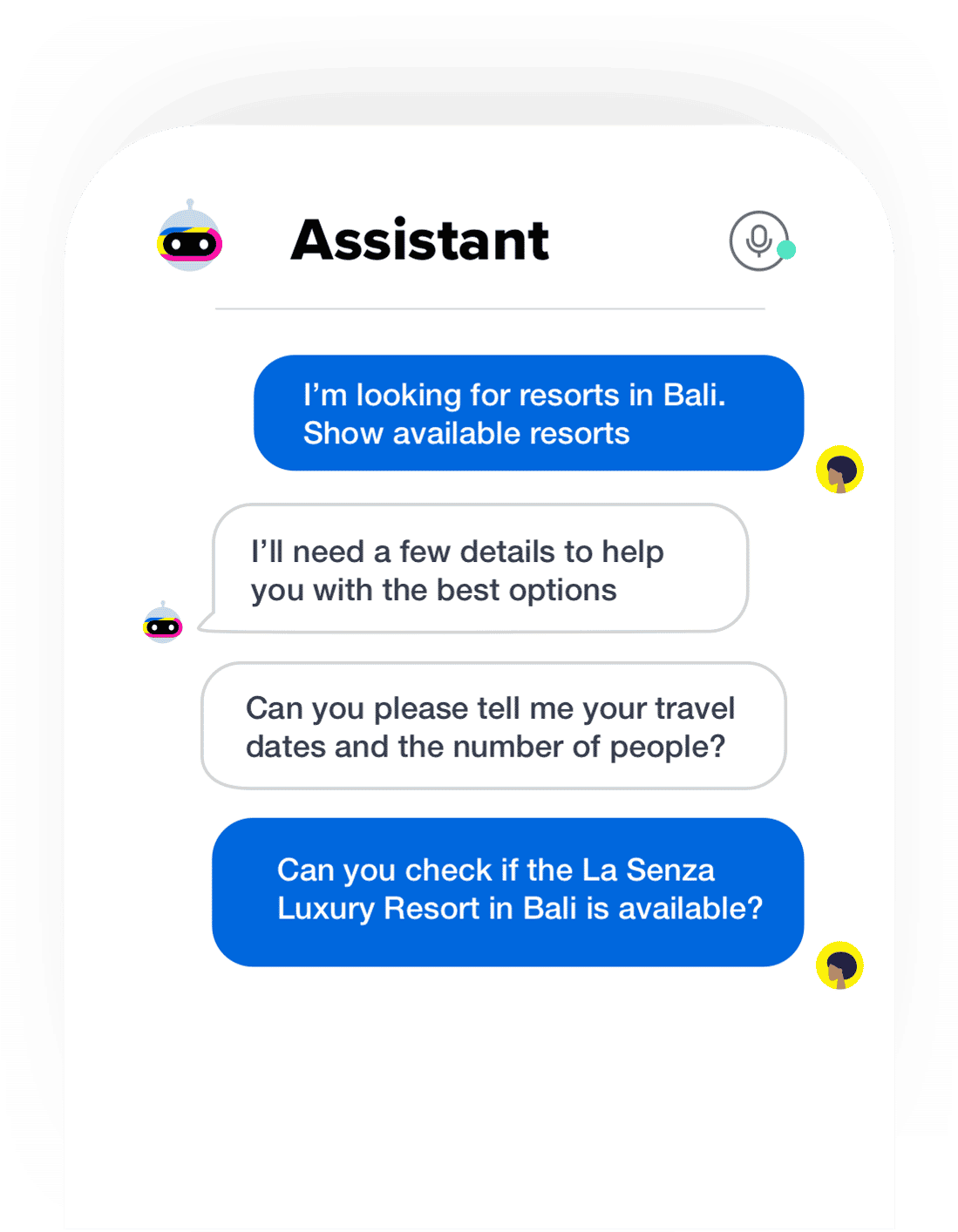

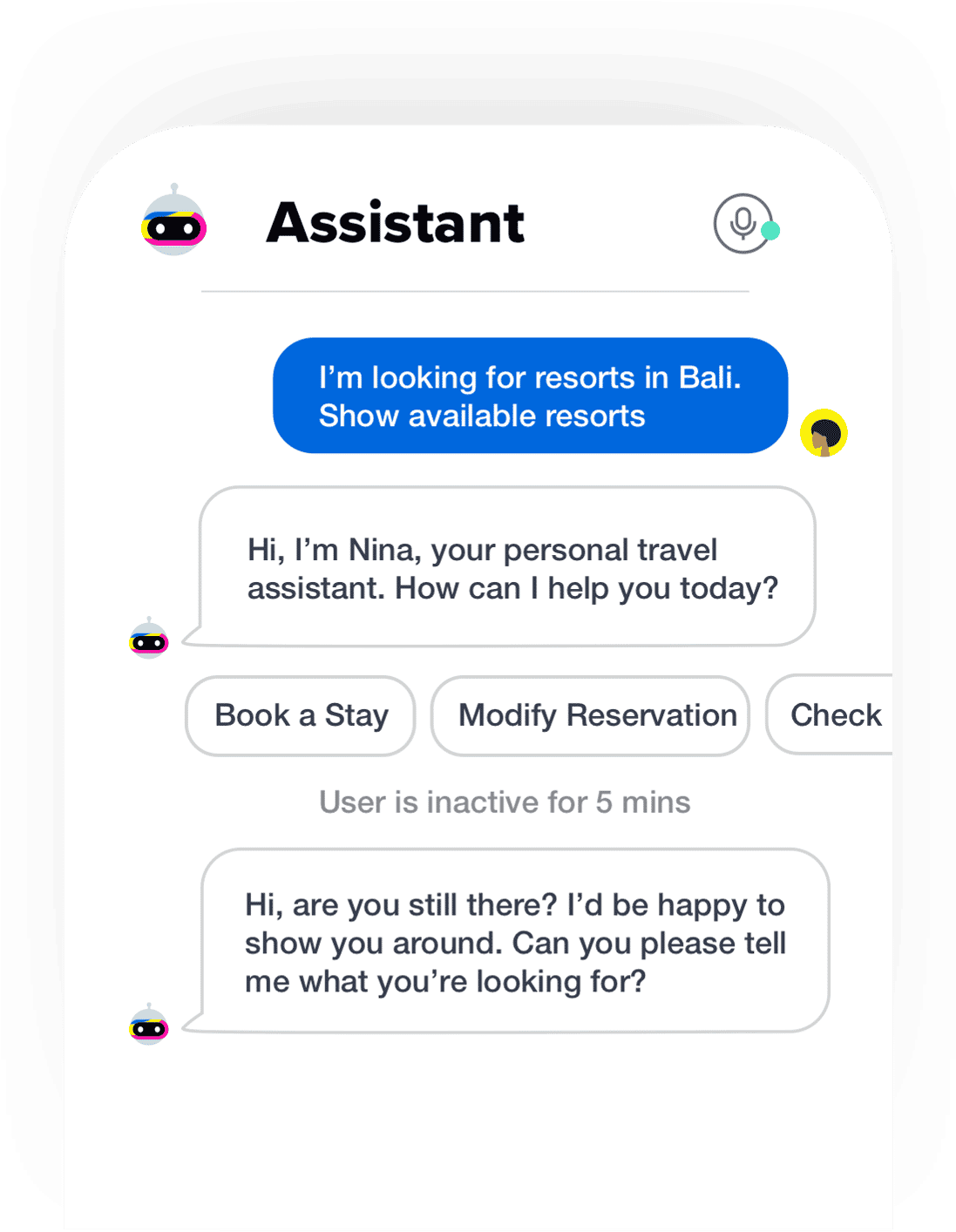

When the IVA fails to recognize the user’s real intent, it sends a wrong response. Such false positives hurt the user experience.

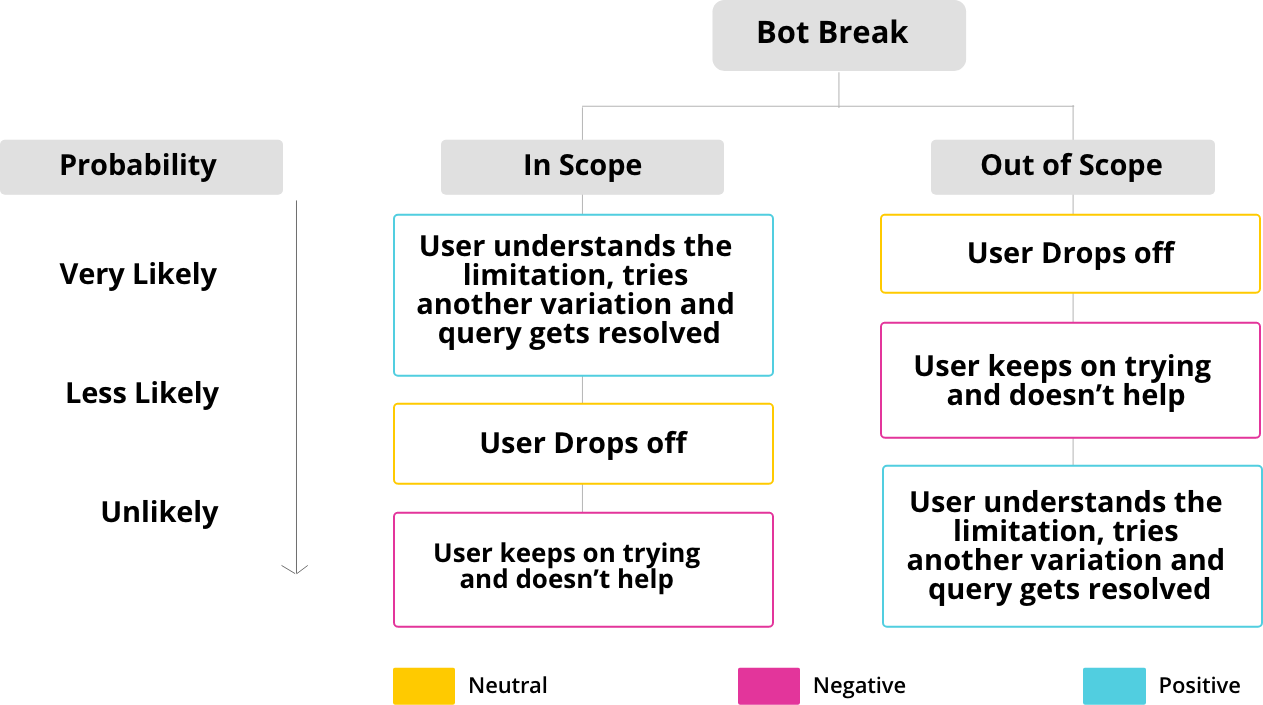

An IVA ‘breaks’ when it is unable to recognize the intent of the user. An IVA designer configures a response to be sent to the user in such a scenario to convey the context and scope of the IVA.
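The difference between a bot break and a false positive can be sketched in a few lines of Python. This is an illustration under stated assumptions, not a description of how any particular IVA works. It assumes the intent recognizer returns a confidence score per intent and that a score below a threshold counts as "intent not recognized". The names `choose_response`, `is_false_positive`, `FALLBACK_RESPONSE`, and the threshold value 0.6 are all hypothetical.

```python
# Hypothetical designer-configured reply that conveys the scope of the IVA.
FALLBACK_RESPONSE = "Sorry, I can only help with policy and claim questions."


def choose_response(intent_scores, responses, threshold=0.6):
    """Return (intent, reply); intent is None when the IVA breaks.

    Assumption: `intent_scores` maps intent names to confidences in [0, 1].
    """
    if intent_scores:
        intent, score = max(intent_scores.items(), key=lambda kv: kv[1])
        if score >= threshold and intent in responses:
            return intent, responses[intent]
    # Bot break: no intent was recognized with enough confidence.
    return None, FALLBACK_RESPONSE


def is_false_positive(predicted_intent, true_intent):
    """True when the IVA answered, but for the wrong intent."""
    return predicted_intent is not None and predicted_intent != true_intent
```

Under these assumptions, raising the threshold trades false positives for more bot breaks, and lowering it does the opposite.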

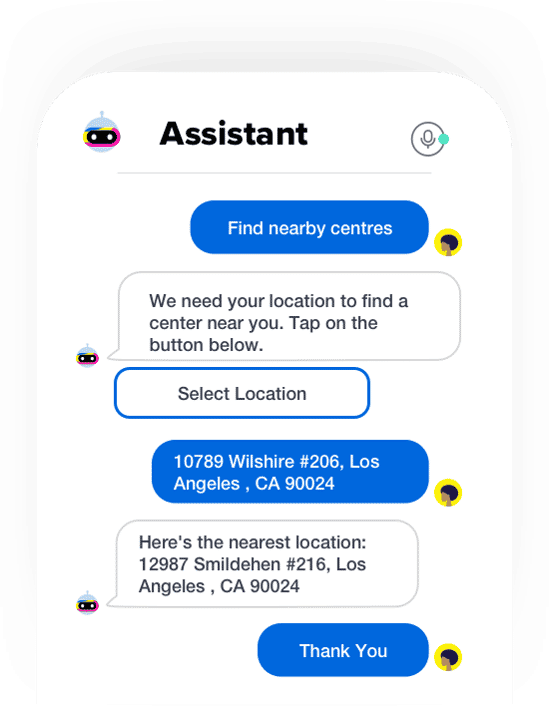

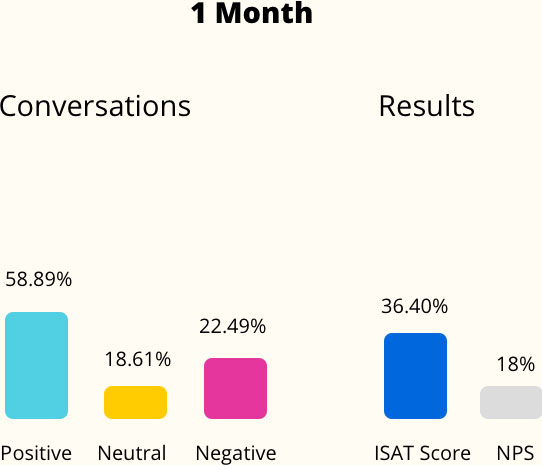

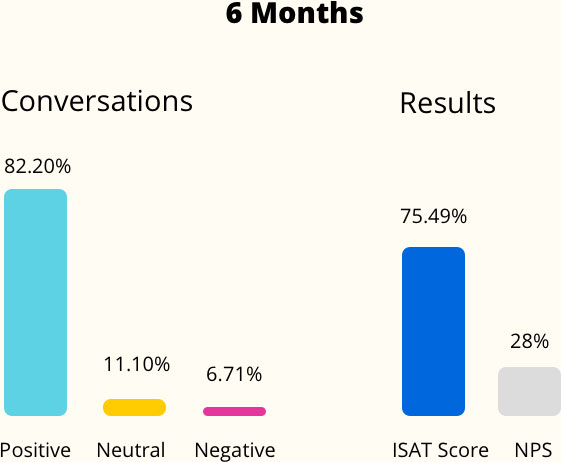

For one of the world’s largest insurance companies, we used the ISAT as a benchmark to improve the quality of the IVA, which in turn improved the overall NPS.

“Our customers often contact us with the same kind of requests. Using Haptik, we built Zuri and we have been able to achieve greater business efficiency and 70% end-to-end query resolution. In addition to the positive response from our customers, Zuri has helped us to offer 24x7 support to complement our HelpPoint timings and ensure we are there when our customers need us the most.”

— Mark Cady, Head of Operations, Zurich International

Asia Pacific | EMEA | North America | enterprise@haptik.ai